The Number Nobody Shows You

Ask ChatGPT to recommend a CRM. Write down the list. Ask again.

You'll likely get different brands back. Penn State researchers documented accuracy swings of 15% on identical prompts, even at temperature=0. Same input. Different output often enough to matter.

So when an AI visibility tool tells you "your brand visibility is 42%," the obvious question is: how many times did you ask? What's the margin of error? How confident are you in that number?

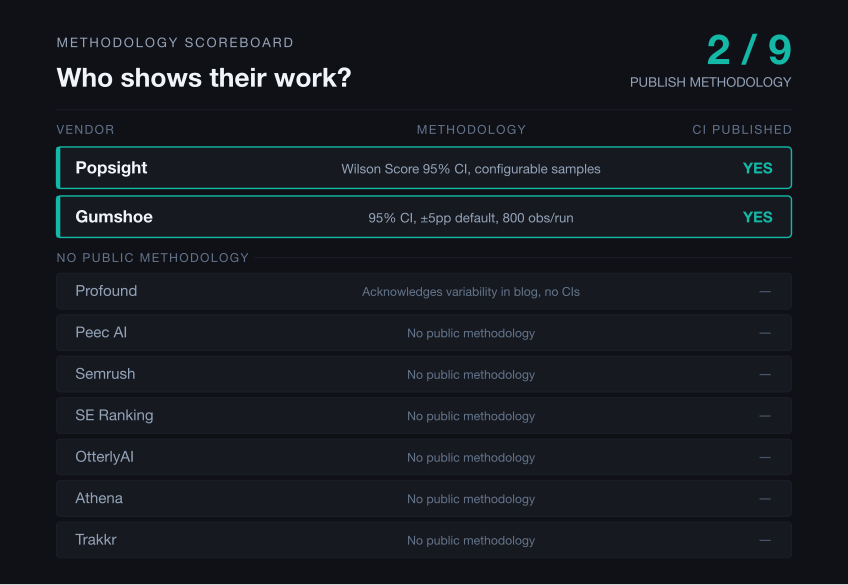

We reviewed eight competitors in 2026. One publicly documents confidence intervals. For the other seven, we found no public documentation of sample sizes or confidence intervals.

This is a methodology-first comparison. We built the table we wish existed when we started evaluating tools ourselves, using only public materials, with source links where public sources were available. We compete in this category (Popsight is our product), so verify anything that matters to you. Full audit sources and limitations appear in the appendices.

Methodology tells you whether to trust the score. Readiness and citation-health checks tell you what to fix when the score is low. This article covers both.

Key findings

- Among the eight competitors reviewed here, only Gumshoe publicly documents confidence intervals in the materials we found.

- Several tools now offer readiness audits, but the depth and public documentation vary.

- Popsight is positioned around local-first measurement plus diagnosis: bot access, CMP suppression, citation health, and Quick Wins.

- Provider count matters less than whether the provider matches your buyers' search behavior.


What Is an AI Visibility Tool?

An AI visibility tool measures how often your brand appears in AI-generated answers. You give it prompts ("What's the best CRM for small businesses?"), it runs those prompts through ChatGPT, Claude, Gemini, Perplexity, and other AI products, then reports where and how often your brand shows up.

Traditional SEO tools start from pages and keywords. AI visibility tools start from prompts, generated answers, cited sources, and brand mentions inside those answers.
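
At its core, the measurement is a count: of the collected answers, what share mention the brand? Here is a minimal sketch. It assumes the responses have already been collected from each provider, and it counts a case-insensitive whole-word match as a mention. Real tools likely handle aliases, misspellings, and product names more carefully. The sample answers are invented for illustration.

```python
import re


def mentions_brand(response: str, brand: str) -> bool:
    """True if `brand` appears as a whole word or phrase, ignoring case."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def visibility_rate(responses: list[str], brand: str) -> float:
    """Share of responses that mention the brand at least once."""
    if not responses:
        raise ValueError("need at least one response")
    return sum(mentions_brand(r, brand) for r in responses) / len(responses)


# Invented answers to "What's the best CRM for small businesses?"
responses = [
    "For small teams, HubSpot and Pipedrive are popular picks.",
    "Consider Salesforce, Zoho CRM, or HubSpot.",
    "Pipedrive is a solid choice for sales-led startups.",
    "Many small businesses start with a spreadsheet before a CRM.",
]
print(visibility_rate(responses, "HubSpot"))  # 0.5
```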

The category is young. Many vendors appear to have launched or repositioned around AI visibility in late 2024 and 2025. Roughly $90 million in venture funding is concentrated in three players: Profound has raised $58.5M total (per its Series B announcement), Peec AI has raised $29M total (per its Series A announcement), and Athena has raised $2.2M (per its seed announcement).

Pew Research found that users who encounter an AI summary click traditional search results 8% of the time, compared to 15% when no AI summary appears. Ahrefs measured a 58% lower click-through rate for pages where an AI Overview appears. ChatGPT alone has 900 million weekly active users. These studies suggest AI answer surfaces reduce downstream clicks from Google search. They do not prove AI mentions replace all brand discovery, but they make visibility in AI answers worth tracking.

For a deeper introduction to the category, see our guide to generative engine optimization (GEO).

Why Methodology Matters More Than Features

Most AI visibility tools report signal without quantifying the noise.

When you run a prompt once through ChatGPT and record whether your brand appears, you've taken a single sample from a highly variable distribution. That sample might be representative of what users typically see, or it might be an outlier. You have no way to know.

Tools that run multiple samples per prompt and compute confidence intervals can tell you: "Your brand appears 45% of the time, plus or minus 8 percentage points at 95% confidence." That's a range of 37% to 53%: wide, but honest. Tools that don't disclose how many samples sit behind a score give you "45%," full stop. With only a handful of samples, the true rate could plausibly be 20% or 70%, and you'd never know.
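
An interval like that can be computed with the Wilson score method, which Popsight uses. The code below is a generic textbook implementation, not Popsight's code. It assumes each sample is an independent yes/no "brand mentioned" observation. The counts (68 of 150) are hypothetical, chosen to land near the 45% ± 8pp example.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95% confidence)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p_hat = successes / n
    denom = 1 + z**2 / n
    # The center is pulled toward 0.5, which keeps the interval sensible near 0% and 100%.
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = (z / denom) * sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2))
    return center - half_width, center + half_width


print(wilson_interval(68, 150))  # approximately (0.376, 0.533)
print(wilson_interval(1, 1))     # roughly (0.21, 1.0)
```

The second call shows why single queries are so weak: one sample where the brand appeared is consistent with a true rate anywhere from about 21% to 100%.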

This matters when you're making decisions. If your brand visibility dropped from 45% to 38%, did something change? With confidence intervals, you can check whether the two ranges overlap. If they don't, something real probably happened. If they overlap substantially, the difference may well be noise, though overlap is a conservative shortcut rather than a formal test. Without confidence intervals, you're guessing.
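
Interval overlap is a quick check. A two-proportion z-test asks "did the rate change?" directly. Here is a minimal sketch using the normal approximation. It assumes both runs are independent samples with known counts, which is a simplification for repeated LLM queries. The counts are hypothetical: 68 of 150 (about 45%) versus 57 of 150 (38%).

```python
from math import erf, sqrt


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int) -> tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z statistic, two-sided p-value)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("both runs are all hits or all misses; the test is undefined")
    z = (p1 - p2) / se
    # Standard normal CDF via erf: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - phi)


z, p = two_proportion_z_test(68, 150, 57, 150)
print(f"z = {z:.2f}, p = {p:.2f}")  # z = 1.29, p = 0.20
```

At 150 samples per run, a 45% to 38% drop is well within what noise alone produces. The same rates measured over ten times as many samples would give p < 0.001.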

Of the eight competitors we reviewed, only Gumshoe publishes this methodology publicly. For the other seven, public materials emphasize point-estimate metrics, but we found no documentation of sample sizes or variance handling.

What to Look For in 2026

Before comparing specific tools, here's the buyer's checklist.

Methodology Transparency

Does the tool publish sample sizes and confidence intervals? Without them, a "45% visibility score" might actually be anywhere from 25% to 65%. This single filter separates measurement from guesswork.
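
The arithmetic behind that filter is short. Under the usual normal approximation, the samples needed for a ±margin are z² · p(1 − p) / margin². A ±20pp range (25% to 65%) corresponds to only about two dozen samples. The sketch below uses the worst case, p = 0.5. Vendors may use other interval methods, such as Wilson, or account for correlation between samples, which pushes the count higher.

```python
from math import ceil


def samples_needed(margin: float, p: float = 0.5, z: float = 1.96) -> int:
    """Approximate sample count for a ±margin at ~95% confidence (normal approximation).

    p = 0.5 is the worst case: it produces the widest interval for a given sample count.
    """
    if not 0 < margin < 1:
        raise ValueError("margin must be a fraction between 0 and 1")
    return ceil(z**2 * p * (1 - p) / margin**2)


for margin in (0.20, 0.10, 0.05, 0.025):
    print(f"±{margin * 100:g}pp needs about {samples_needed(margin)} samples")
# ±20pp needs about 25 samples
# ±10pp needs about 97 samples
# ±5pp needs about 385 samples
# ±2.5pp needs about 1537 samples
```

Halving the margin takes roughly four times the samples, which is why precision costs real query volume.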

Provider Coverage

For most buyers, ChatGPT and Perplexity should be baseline coverage. Perplexity matters because it surfaces citation-heavy answers for search-oriented queries, often capturing buyer-intent traffic that starts with "best X for Y" prompts. Claude, Gemini, Grok, DeepSeek, and Meta AI separate broad-coverage tools from limited ones. Watch for providers listed as "roadmap" or charged as add-ons.

Provider count is not provider importance. Weight engines by where your buyers actually ask questions. ChatGPT's scale makes ChatGPT coverage more valuable than adding long-tail engines.

Deployment Model

Cloud SaaS stores your prompts and results on vendor servers. Desktop tools keep everything local. If you have data residency requirements or run competitive intelligence, deployment model matters.

Pricing Structure

Entry tiers range from $0 (Trakkr Free) to $499/month (Profound Lite). Enterprise pricing is often reported around $2,000-$5,000+ per month. Some vendors hide prices behind sales calls.

BYO-API Keys

BYO-API (bring your own API keys) means you pay provider rates directly (roughly $0.01-0.03 per query). Vendor-proxy pricing can be higher than raw provider API costs, especially at low prompt volumes. One caveat: API responses may not exactly match what users see in the ChatGPT or Claude web interfaces.
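
BYO-API spend is simple multiplication, so it is easy to sanity-check before committing. Here is a minimal sketch. The workload (50 prompts, 10 samples each, 4 providers, weekly runs) is hypothetical, and the per-query range is the rough $0.01-0.03 figure above. Actual cost depends on model choice, response length, and whether web search is enabled.

```python
def monthly_api_cost(prompts: int, samples_per_prompt: int, providers: int,
                     runs_per_month: int, cost_per_query: float) -> float:
    """Provider API spend for a month of tracking: every prompt x sample x provider x run."""
    queries = prompts * samples_per_prompt * providers * runs_per_month
    return queries * cost_per_query


# Hypothetical workload: 50 prompts, 10 samples each, 4 providers, weekly runs (8,000 queries).
low = monthly_api_cost(50, 10, 4, 4, cost_per_query=0.01)
high = monthly_api_cost(50, 10, 4, 4, cost_per_query=0.03)
print(f"${low:,.0f}-${high:,.0f} per month")  # $80-$240 per month
```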

Action, Not Just Monitoring

A visibility score tells you whether AI products mention your brand. It does not tell you why they don't.

Some tools appear to focus mainly on measurement. Others also connect measurement to fixes: crawler access checks, bot blocks, consent wall detection, schema gaps, cited-URL health, and content clarity. If your visibility is low, does the tool tell you what to change? Or does it leave you staring at a number?

Alerts and Integrations

Slack alerts when visibility drops. Looker Studio or GA integration. CSV exports. Multi-seat workspaces for agencies. Check whether these ship today or sit on a roadmap.

Security and Governance

Larger teams will ask about SOC 2 certification, SSO support, role-based access controls, and audit logs. Data retention policies matter if you're tracking competitive intelligence you don't want sitting on vendor servers indefinitely. Check whether prompts and results can be deleted or exported on demand.

AI Visibility Tools Compared

This comparison table is based on primary-source research: direct review of each vendor's pricing page, product documentation, and marketing materials as of April 2026.

All pricing information reflects publicly available sources as of April 2026. Third-party reported prices may have changed.

| Tool | Methodology | Providers | Entry Price | Deployment | BYO-API |
|---|---|---|---|---|---|
| Popsight | 95% CI, Wilson Score, configurable samples, brand-seeded prompt separation | 4 (ChatGPT, Claude, Gemini, Perplexity) | Annual license (public pricing page, April 2026) | Desktop (local) | Yes |
| Gumshoe | 95% CI, 800 observations per run default, published | 7+ (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, AI Overviews) | Pay-as-you-go $0.10/conversation (public pricing page, April 2026) | Cloud SaaS | No |
| Profound | Not published | 9 (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Meta AI, Copilot, AI Overviews) | ~$399-499/mo (third-party reported, vendor does not publish) | Cloud SaaS | No |
| Peec AI | Not published | 7 (ChatGPT, Gemini, Perplexity, Copilot, Grok, AI Overviews, AI Mode) | ~€85-90/mo (third-party reported, vendor does not publish) | Cloud SaaS | No |
| Semrush AI Toolkit | Not published | 4 (ChatGPT, Gemini, Perplexity, AI Overviews) | $99/mo standalone (public pricing page, April 2026) | Cloud SaaS | No |
| SE Ranking | Not published | 5 via SE Visible (ChatGPT, Perplexity, Gemini, Google AI Overviews, AI Mode); older AI Tracker materials list narrower coverage | From about €63-89/mo depending on billing and region (public pricing page, April 2026) | Cloud SaaS | No |
| OtterlyAI | Not published | 4-6 (ChatGPT, Perplexity, Copilot, AI Overviews; Gemini, AI Mode as paid add-ons) | $29/mo (public pricing page, April 2026) | Cloud SaaS | No |
| Athena | Not published | 8 (ChatGPT, Claude, Gemini, Perplexity, Copilot, Grok, AI Overviews, AI Mode) | ~$95-295/mo (estimated from reviews, unverified) | Cloud SaaS | No |
| Trakkr | Not published | 8 (ChatGPT, Claude, Gemini, Perplexity, Grok, DeepSeek, Meta AI, Copilot) | Free tier ($0) (public pricing page, April 2026) | Cloud SaaS | No |

The methodology column is the headline. Among the eight competitors in this table, only Gumshoe publicly documents confidence intervals in the materials we reviewed. Its methodology blog post documents a default of 800 observations per run and ±5pp at 95% confidence, with multi-run precision tightening to ±2.5pp. For the other seven, public materials emphasize point-estimate "share of voice," but we found no public documentation of sample sizes or variance handling.

Profound's own blog acknowledges variability ("LLM tools don't give the same answer every time to every user"), but we found no public methodology explaining how Profound controls for that variability.

Among the eight competitors in our main comparison, we found no public evidence of BYO-API keys, desktop deployment, or annual licensing instead of monthly subscription.

Quick verdict by use case

| Use case | Best fit |
|---|---|
| Public methodology (among competitors) | Gumshoe |
| Broadest provider coverage / enterprise workflows | Profound |
| European/multi-country tracking | Peec AI |
| Free tier | Trakkr |
| Existing SEO-suite workflow | Semrush or SE Ranking |
| Local/BYO-API setup | Popsight |

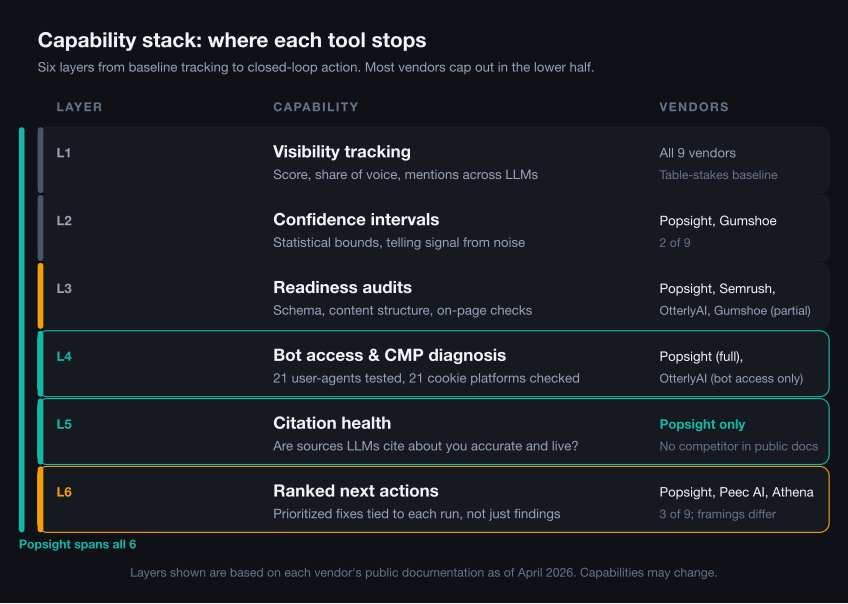

Beyond Visibility Tracking

The table above compares measurement basics. But a low visibility score raises a follow-up question: why?

This table compares operational capabilities beyond core tracking. Entries marked "(public docs)" come from vendor-owned documentation. "(Third-party)" means the claim appears in reviews but we could not find vendor-owned confirmation. "Not publicly documented" means we found no public evidence in vendor websites, documentation, or third-party reviews. Absence of documentation does not mean absence of the feature.

Competitor discovery means whether the tool can suggest competitors or adjacent brands from AI responses or citation domains, rather than requiring the user to enter every competitor manually. "Manual" means you must specify competitors. "Semi-automatic" means the tool suggests competitors but you select them. "Auto-discovery" means the tool surfaces competitors from AI responses without prior configuration.

| Tool | Readiness Audit | Bot Access Checks | Citation Health | Competitor Discovery |
|---|---|---|---|---|
| Popsight | In-app: 50+ checks across crawler access, renderability, schema, content clarity (public feature page forthcoming) | In-app: 21 AI crawler user-agents | In-app: validates cited URLs for redirects, noindex, HTTP errors | Observed actors from citation domains |
| Gumshoe | AIO Score (AI optimization score), Page Audit: schema, JSON-LD, metadata, heading structure (public docs) | General robots.txt; no AI-specific crawler validation documented | Not publicly documented | Hybrid: AI suggests, user configures (up to 30) |
| Profound | Partial: Agent Analytics via CDN logs (schema, renderability, canonicals) | Not publicly documented | Not publicly documented | No public auto-discovery documentation found |
| Peec AI | Not publicly documented | Not publicly documented | Not publicly documented | Partial auto-discovery (2+ mentions threshold) |
| Semrush | AI Search Checks in Site Audit up to 100 pages (public docs); robots.txt/llms.txt/schema details reported by third parties | Reported: 8+ AI crawlers (third-party) | Not publicly documented | Semi-automatic (suggests, manual selection) |
| SE Ranking | Not publicly documented (general SEO audit only) | Adjacent: standalone robots.txt tester includes AI crawlers, not integrated into tracker | Not publicly documented | No public auto-discovery documentation found (up to 5 competitors) |
| OtterlyAI | GEO Audit: Crawlability + Content Checker, 20+ factors (public docs) | Yes: robots.txt parsing + AI Crawler Simulation (public docs) | Not publicly documented | No public auto-discovery documentation found |
| Athena | Schema/content gap analysis mentioned in public materials | Mentioned, not enough public detail to evaluate | Not publicly documented | Not enough public detail to evaluate |
| Trakkr | Partial: educational content, recommends third-party tools | Not publicly documented (educational checklists only) | Not publicly documented | Yes: auto (2+ co-mentions) + manual |

llms.txt is an emerging file format some teams use to guide AI crawlers, similar in concept to robots.txt but not yet a widely adopted standard.
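
Threshold-based competitor auto-discovery, as defined above, can be sketched as a counting problem. This sketch assumes brand names have already been extracted from each response; that extraction is the hard part and is omitted here. The default threshold of 2 mirrors the "2+ mentions" figures in the table. This is an illustration of the idea, not any vendor's implementation, and the brand sets are invented.

```python
from collections import Counter


def discover_competitors(brands_per_response: list[set[str]], tracked: set[str],
                         min_mentions: int = 2) -> list[tuple[str, int]]:
    """Untracked brands named in at least `min_mentions` responses, most frequent first."""
    counts = Counter()
    for brands in brands_per_response:
        counts.update(brands)  # a set counts each brand at most once per response
    candidates = [(b, c) for b, c in counts.items() if b not in tracked and c >= min_mentions]
    # Sort by mention count (descending), then alphabetically for stable output.
    return sorted(candidates, key=lambda bc: (-bc[1], bc[0]))


# Invented brand sets extracted from four AI answers.
extracted = [
    {"HubSpot", "Pipedrive"},
    {"Salesforce", "HubSpot", "Zoho CRM"},
    {"Pipedrive", "Zoho CRM"},
    {"Close"},
]
print(discover_competitors(extracted, tracked={"HubSpot", "Salesforce"}))
# [('Pipedrive', 2), ('Zoho CRM', 2)]
```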

Several tools now offer some form of readiness audit. Popsight combines local-first measurement, statistical validity controls, AI-crawler and CMP diagnostics, cited-URL health checks, and post-run action recommendations in one workflow.

Among the tools we reviewed, we found no public documentation of cited-URL health checks comparable to Popsight's in-app HTTP validation of the URLs that AI systems cite from your domain: redirects, noindex/nosnippet headers, HTTP errors, and stale page signals. A typical failure mode: ChatGPT cites your pricing page, but that page carries an X-Robots-Tag: noindex header inherited from a Vercel preview promotion.
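
Once a cited URL has been fetched, flagging these problems comes down to a few header checks. The fetch should have automatic redirect-following turned off, so that 3xx responses stay visible. Here is a minimal sketch that takes the status code and headers as inputs. It is not Popsight's implementation. Fetching is left out, and the sketch ignores HTML meta robots tags and bot-specific X-Robots-Tag forms such as "googlebot: noindex".

```python
def cited_url_issues(status: int, headers: dict[str, str]) -> list[str]:
    """Flag common problems for a cited URL, given its HTTP status and response headers.

    Assumes the fetch was made without following redirects, so 3xx codes are visible.
    """
    h = {name.lower(): value for name, value in headers.items()}  # header names are case-insensitive
    issues = []
    if 300 <= status < 400:
        issues.append(f"redirect ({status}) to {h.get('location', 'unknown target')}")
    elif status >= 400:
        issues.append(f"HTTP error {status}")
    # Directives are comma-separated; bot-specific forms like "googlebot: noindex" are not handled.
    directives = {d.strip().lower() for d in h.get("x-robots-tag", "").split(",")}
    for directive in ("noindex", "nosnippet", "none"):
        if directive in directives:
            issues.append(f"X-Robots-Tag: {directive}")
    return issues


print(cited_url_issues(200, {"X-Robots-Tag": "noindex"}))  # ['X-Robots-Tag: noindex']
```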

Tool-by-Tool Breakdown

Profound

Their blog acknowledges variability: "LLM tools don't give the same answer every time to every user." We found no public documentation explaining how Profound controls for that variability. Public materials show the number, but we found no public sample-size or confidence-interval disclosure.

Profound has raised $58.5M from Khosla, Kleiner Perkins, and Sequoia (per company materials). They track 9 engines including Claude, Grok, DeepSeek, and Meta AI. Named-brand customers include Ramp, US Bank, Indeed, MongoDB, and DocuSign (per Profound website).

Pricing requires a sales conversation; no public price list. Third-party reviews (as of April 2026) report entry tiers at $399-499/month for limited prompts. Enterprise pricing is often reported around $2,000-$5,000+ per month. No free trial. No self-serve signup. SOC 2 and SSO for enterprise. Google Analytics integration.

Profound is a natural shortlist candidate for buyers who prioritize provider coverage, integrations, and enterprise support.

Peec AI

Berlin-based, which may appeal to European buyers with GDPR and data-residency concerns.

Peec has raised $29M through Series A (Antler, 20VC, Singular). Seven providers including Grok. Multi-country tracking across European markets. 1,300+ customers and reportedly adding 300+ per month.

Pricing is displayed after completing a "Get Started" signup flow rather than on a public pricing page. Third-party reviews (as of April 2026) suggest Starter around €85-90/month, Pro at €199-205/month, Advanced at €425/month. No Claude at standard tier. No published methodology.

Semrush AI Visibility Toolkit

One of the most established SEO platforms ships one of the narrower provider lists.

Four engines: ChatGPT, Gemini, Perplexity, AI Overviews. No Claude. No Grok. No DeepSeek at standard tier. No published methodology.

$99/month standalone for 25 prompts, or bundled with Semrush One from $199/month. No free trial for the standalone toolkit. Existing Semrush customers get CSV exports and no context-switching. If you're choosing a tool from scratch, the narrow coverage is hard to justify.

SE Ranking

SE Ranking's public materials vary by product naming and package. SE Visible currently lists Google AI Mode, AI Overviews, Gemini, Perplexity, and ChatGPT. Older AI tracker references may show narrower coverage (4 engines with Claude and Gemini "roadmap only").

From about €63-89/month add-on depending on billing and region (requires a core plan at €87-188/month). Free trial without credit card. SMBs already using SE Ranking can add basic AI tracking without switching platforms. No published methodology.

OtterlyAI

OtterlyAI is bootstrapped, which makes its transparent pricing and partnerships notable in a category with heavily funded competitors.

Gartner named OtterlyAI a Cool Vendor in 2025. It has a technology partnership with Semrush and 25,000+ marketers on the platform.

OtterlyAI lists Lite at $29/month (15 prompts). Public and third-party pages conflict on higher tiers, with Standard shown around $160-189/month (100 prompts) and Premium around $422-489/month (400 prompts). Free trial available. Looker Studio integration. Agency partner program. Claude, Grok, and DeepSeek not available at base tier. Gemini and AI Mode are paid add-ons. No published methodology.

Athena

Is it $95/month or $295/month? Sources report both. Enterprise is custom. Buyers should confirm pricing directly before comparing Athena against fixed-price alternatives.

Founders from Google Search and DeepMind. $2.2M raised via Y Combinator. Eight providers including Claude and Grok.

Marketing emphasizes case-study results ("6X Share of Voice Lift") but no published methodology explains how those numbers were calculated.

Trakkr

$0/forever. One brand. Five prompts. Six models. Not a trial. An actual free tier.

Growth runs $79/month. Scale at $399/month adds white-label portal and API access for agencies. Eight providers total. MCP server support at Scale tier for teams connecting the tool into agent workflows.

No published methodology. Trakkr publishes competitor comparison content, so treat those reviews as vendor-authored. Agencies can evaluate using the free tier before committing.

Gumshoe

Apart from Popsight, Gumshoe is the only vendor we reviewed that publicly stakes a methodological claim: 95% CI, ±5pp default, 800 observations per run, ±2.5pp on multi-run. Its sampling design is persona-driven.

Seven+ providers. Free baseline report to evaluate. Then pay-as-you-go at $0.10 per conversation.

The tradeoffs differ from Popsight: cloud instead of desktop, vendor-proxy instead of BYO-API, metered instead of annual. Per-conversation pricing can add up for high-volume tracking. But the statistical rigor is there.

Popsight

Popsight is our product. Treat this section as vendor-authored.

The core difference is that Popsight combines visibility measurement with an action-oriented workflow. It tracks ChatGPT, Claude, Gemini, and Perplexity using BYO-API keys, computes Wilson Score confidence intervals, and separates unbranded visibility prompts from brand-seeded perception prompts (so "What do you know about Acme?" doesn't inflate your visibility score).
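
The idea behind that separation can be shown in a few lines: any prompt that names the brand is routed out of the visibility score. This sketch illustrates the idea and is not Popsight's code. It assumes a prompt counts as brand-seeded if it names the brand or a listed alias as a whole word. "Acme" comes from the example above. The alias "AcmeCRM" and the prompts are hypothetical.

```python
import re


def names_brand(prompt: str, names: list[str]) -> bool:
    """True if any brand name or alias appears as a whole word (case-insensitive)."""
    return any(
        re.search(r"(?<!\w)" + re.escape(name) + r"(?!\w)", prompt, flags=re.IGNORECASE)
        for name in names
    )


def split_prompts(prompts: list[str], names: list[str]) -> tuple[list[str], list[str]]:
    """Return (unbranded visibility prompts, brand-seeded perception prompts)."""
    unbranded = [p for p in prompts if not names_brand(p, names)]
    seeded = [p for p in prompts if names_brand(p, names)]
    return unbranded, seeded


prompts = [
    "What's the best CRM for small businesses?",
    "What do you know about Acme?",
    "Is AcmeCRM a good fit for agencies?",
    "Top CRM tools for real estate teams",
]
unbranded, seeded = split_prompts(prompts, names=["Acme", "AcmeCRM"])
# Only `unbranded` feeds the visibility score; `seeded` feeds perception analysis.
print(len(unbranded), len(seeded))  # 2 2
```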

The in-app AI Readiness scan covers:

- Crawler access and bot blocking (21 AI crawler user-agents)

- JavaScript renderability

- Technical hygiene

- Structured data validation across 17 schema types

- Multi-page crawl and link-depth issues

- Content clarity for retrieval

It also fingerprints 21 consent platforms and compares Chrome vs GPTBot fetches to detect CMP suppression, and validates cited URLs for redirects, noindex headers, and HTTP errors.
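
Robots.txt rules are the simplest kind of bot block to check. This sketch uses Python's standard-library `urllib.robotparser`. The user-agent list is a small illustrative subset, not Popsight's list of 21. The parser's matching is also simpler than some crawlers' (first matching group, plain prefix rules, no path wildcards). Robots.txt is only one layer: CDN or firewall rules and consent walls can block crawlers that robots.txt allows, which is what fetch comparisons are for.

```python
from urllib.robotparser import RobotFileParser

# A few widely documented AI crawler user-agent tokens; not an exhaustive list.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, url: str, agents: list[str] = AI_CRAWLERS) -> dict[str, bool]:
    """Map each user-agent token to whether robots.txt allows it to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


robots = """
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""
print(ai_crawler_access(robots, "https://example.com/pricing"))
# GPTBot is blocked everywhere; the other agents fall through to the * group.
```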

After every run, the Quick Wins engine emits ranked next actions without additional LLM cost: prompts where competitors dominate and you don't appear, prompts where you're mentioned but never rank first, citation gaps where competitors get links and you don't. Observed Actors surfaces untracked competitors and adjacent brands that AI products mention alongside you but aren't in your tracked competitor list.
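
Rules like these only read results that a run has already stored, which is why they need no extra LLM calls. Here is a minimal sketch under stated assumptions: per-prompt aggregates are already computed. The field names, the 0.5 "dominance" threshold, and the priority order are hypothetical and are not Popsight's Quick Wins engine.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    your_rate: float          # share of samples that mention you
    competitor_rate: float    # share of samples that mention the strongest competitor
    your_first_rate: float    # share of samples where you are named first
    you_cited: bool           # at least one of your URLs was cited
    competitor_cited: bool    # at least one competitor URL was cited


# Lower number = shown first. This ordering is an illustrative assumption.
PRIORITY = {"absent": 0, "never_first": 1, "citation_gap": 2}


def quick_wins(results: list[PromptResult], dominance: float = 0.5) -> list[tuple[str, str]]:
    """Rule-based (kind, prompt) actions from stored run results, most urgent first."""
    found = []
    for r in results:
        if r.your_rate == 0 and r.competitor_rate >= dominance:
            found.append(("absent", r))
        elif r.your_rate > 0 and r.your_first_rate == 0:
            found.append(("never_first", r))
        if r.competitor_cited and not r.you_cited:
            found.append(("citation_gap", r))
    # Most urgent kind first; within a kind, strongest competitor presence first.
    found.sort(key=lambda kr: (PRIORITY[kr[0]], -kr[1].competitor_rate))
    return [(kind, r.prompt) for kind, r in found]


results = [
    PromptResult("best CRM for agencies", 0.0, 0.8, 0.0, False, True),
    PromptResult("best CRM for startups", 0.4, 0.6, 0.0, True, True),
    PromptResult("CRM with email tracking", 0.7, 0.3, 0.5, False, True),
]
for kind, prompt in quick_wins(results):
    print(f"{kind}: {prompt}")
```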

The tradeoff: Popsight has narrower provider coverage (4 vs. 8-9 for Profound, Athena, or Trakkr). If you need the broadest provider coverage or enterprise team workflows, those tools may fit better. If you want local-first measurement plus concrete fix recommendations from a single interface, Popsight is built for that workflow.

Other Tools to Know

A few other players appeared in our research but didn't make the main comparison due to limited public information or narrower positioning:

Scrunch AI (1,900 branded search volume) is an adjacent player in the influencer and social analytics space, with some AI visibility features.

Amplitude (the product analytics company) has an "AI visibility" feature appearing in search results, but it's oriented toward product analytics rather than brand monitoring.

Ahrefs has announced AI visibility features but the product documentation is thin compared to the vendors above.

Daydream positions itself as "SEO agents" combined with expert services. More of a hybrid SaaS-plus-services play than a pure tooling company.

WordLift and xFunnel AI appear in long-tail searches but have limited public information about AI visibility capabilities.

BrandRank.AI is an enterprise-focused tool from Pete Blackshaw (ex-P&G/Nestlé digital exec). Seven providers including DeepSeek and Meta AI. Customer logos include Nestlé, P&G, and Fifth Third Bank (per BrandRank.AI website). Pricing not disclosed publicly. Limited public information about product capabilities. No published methodology.

Head-to-Head: Peec AI vs Profound

Peec AI and Profound get a head-to-head because they are the two best-funded vendors in this comparison ($29M and $58.5M raised, respectively) and the most direct competitors in the enterprise segment.

Provider coverage: Profound wins with 9 engines vs Peec's 7. Profound has Claude; Peec does not at standard tier.

Pricing transparency: Neither wins. Neither publishes a public price list: Profound requires a sales conversation, and Peec shows prices only after a signup flow. Third-party reports suggest Profound starts higher ($399-499/month vs ~€85-90/month for Peec Starter).

Methodology: Neither publishes confidence intervals or sample sizes.

Geography: Peec is Berlin-based with EU hosting. Profound is US-based. This matters for GDPR and data residency requirements.

Market position: Profound targets US enterprise with Fortune 500 logos. Peec targets European brands with multi-country tracking.

Free vs Paid AI Visibility Checkers

Not ready to commit to a paid tool? Here's what actually exists at the free tier.

Trakkr Free ($0/forever): 1 brand, 5 prompts, 6 AI models, 1 article per month. Among the tools reviewed here, this is the only true free tier. Limited, but real.

OtterlyAI Free Trial: Time-limited trial for new users. Converts to paid.

Gumshoe Free Baseline: One free report to evaluate the product. Pay-as-you-go after.

Popsight 14-Day Trial: Full-featured trial, all providers, no credit card required. BYO-API means you pay only provider costs during the trial.

SE Ranking Free Trial: Free trial without credit card for the core SEO platform. AI add-on included.

Manual spot-checks: You can always run prompts yourself in ChatGPT, Claude, and Perplexity and record the results. This is free, but a handful of manual checks cannot separate signal from run-to-run variance (see why AI needs confidence intervals).

The Buyer Question

The buyer question is not just "which tools track the most engines?" It is "which numbers can I trust?" If a vendor cannot tell you sample size, confidence interval, and how they handle run-to-run variability, treat the score as directional at best.

Appendix: Methodology Audit

This table documents what we found (and did not find) when searching for published statistical methodology across all vendors. The central claim of this article is that most AI visibility tools do not publicly document confidence intervals or sample sizes. Absence-of-evidence claims require showing your work.

| Vendor | Pages reviewed | Methodology found? | Notes |
|---|---|---|---|
| Popsight | In-app product, Pricing | Yes | 95% CI using Wilson Score interval; configurable sample sizes; methodology documented here |
| Gumshoe | Methodology blog, Pricing | Yes | 95% CI, ±5pp default, 800 observations per run, ±2.5pp on multi-run |
| Profound | Blog, Help Center, Product | No | Blog acknowledges variability but no sample sizes or CIs published |
| Peec AI | Website, Docs | No | No methodology documentation found |
| Semrush AI Toolkit | AI Visibility Toolkit, Pricing | No | No methodology documentation found |
| SE Ranking | AI Visibility Tracker, Pricing | No | No methodology documentation found |
| OtterlyAI | Pricing, Help | No | No methodology documentation found |
| Athena | Product, YC Profile | No | Case studies cite lift metrics without methodology |
| Trakkr | Pricing, Features | No | No methodology documentation found |

Review date: April 2026. If you represent a vendor named in this comparison and believe we have missed or mischaracterized your methodology documentation, please contact us and we will review and update this article.

Appendix: Operational Capability Audit

This table documents what we found (and did not find) when researching operational capabilities beyond core visibility tracking. Claims without direct vendor documentation are marked accordingly.

| Vendor | Sources Reviewed | Readiness Audit | Bot Checks | Citation Health | Competitor Discovery |
|---|---|---|---|---|---|
| Popsight | In-app product | Yes (in-app) | Yes (in-app) | Yes (in-app) | Observed actors (in-app) |
| Gumshoe | llminfo.md, pricing | AIO Score, Page Audit (public docs) | General robots.txt only | Not documented | Hybrid (public docs) |
| Profound | Blog, Help, Product | Partial via Agent Analytics | Not documented | Not documented | Not documented |
| Peec AI | Website, Docs | Not documented | Not documented | Not documented | 2+ mentions threshold (docs) |
| Semrush | AI Toolkit KB, third-party | AI-search health (public docs + third-party) | Reported: 8+ crawlers | Not documented | Semi-automatic |
| SE Ranking | AI Tracker, Pricing | Not documented | Standalone tool only | Not documented | Not documented |
| OtterlyAI | GEO Audit, Crawlability | GEO Audit (public docs) | Yes (public docs) | Not documented | Not documented |
| Athena | Product, YC | Mentioned in public materials | Mentioned, needs verification | Not documented | Not enough detail to evaluate |
| Trakkr | Pricing, Features | Educational only | Not documented | Not documented | Auto + manual (docs) |

Review date: April 2026.

Limitations

We reviewed public materials only. Some vendors may disclose methodology, sample sizes, or operational capabilities in sales calls, customer documentation, or in-app screens that are not publicly accessible. We mark those as "not publicly documented," not absent.

All third-party product names and trademarks mentioned are the property of their respective owners.

Popsight is our entry in this category: desktop deployment, BYO-API keys, Wilson Score confidence intervals, and an in-app AI Readiness scan that tells you what to fix. If those tradeoffs match your needs, try the 14-day trial.