One Question, Many Answers

Ask ChatGPT to recommend a CRM for your small business. Write down what it says. Now ask again. Same words, same prompt, same everything.

You will get a different list.

This is not a glitch. It is how AI works. And if you are trying to understand what AI says about your brand, it is the single most important thing to wrap your head around.

A 2026 study by SparkToro put hard numbers on this. 600 volunteers ran the same prompts through ChatGPT, Claude, and Google AI a combined 2,961 times. The finding: there was less than a 1-in-100 chance that ChatGPT returned the same list of brands on any two runs. The chance of getting the same list in the same order was closer to 1 in 1,000.

That is not measurement error. That is the medium itself. AI responses are inherently variable, and anyone claiming to measure AI brand visibility with a handful of queries is measuring noise.

This Is Not a Technical Problem. It Is a Math Problem.

You might be thinking: surely there is a setting, a configuration, some way to make AI give consistent answers?

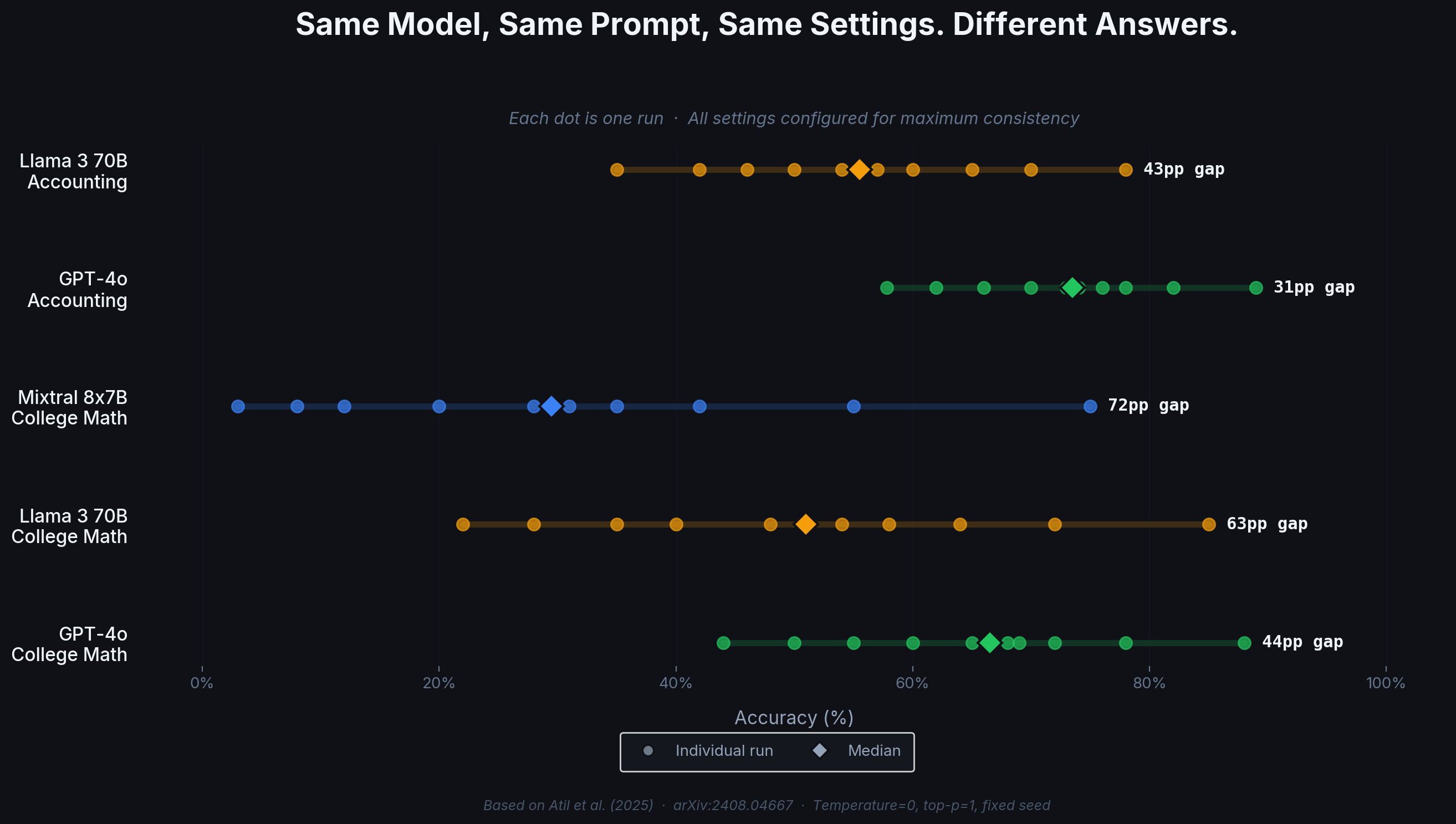

There is not. Researchers at Penn State tested this directly in 2025. They ran five major AI models with every setting configured for maximum consistency and repeated the exact same inputs 10 times per model.

The results:

One model swung from 88% accuracy to 44% across those 10 runs. Another went from 75% to 3%. Same inputs, same configuration, wildly different outputs.

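The cause is fundamental: AI systems process your query alongside thousands of others on shared hardware, and the exact order in which calculations happen varies from run to run. Because computer arithmetic rounds at every step, a different order produces slightly different numbers, and a tiny difference can tip the model toward a different word. The AI providers themselves acknowledge this. Anthropic's API documentation states plainly that results "will not be fully deterministic."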

This is not going away. The Penn State researchers spoke with industry insiders who confirmed that the variability is "perhaps essential to the efficient use of compute resources." Eliminating it would mean slower and more expensive AI for everyone.

So the question is not "how do we make AI give consistent answers." The question is: how do we make reliable measurements from inherently inconsistent data?

That question has been answered. Pollsters, medical researchers, and quality engineers solved it decades ago. The answer is sampling and confidence intervals.

The Polling Analogy

Imagine you want to know which candidate voters prefer in an election. You would not ask one person and call it a day. You would not ask five people. You would survey hundreds, and then use statistics to estimate the true preference, along with a margin of error.

AI brand visibility works the same way.

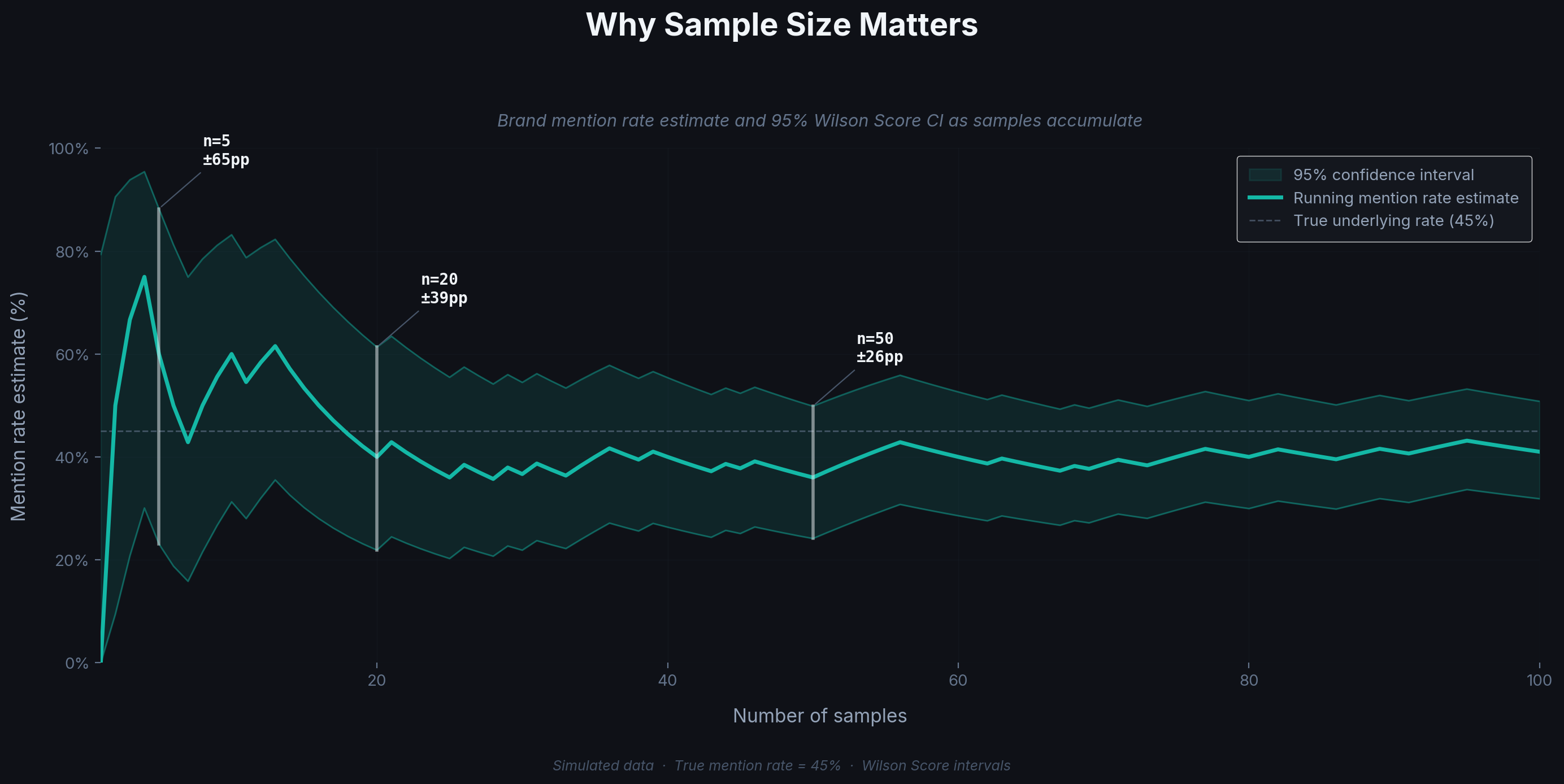

Ask ChatGPT about your product category once, and you get one random draw. Ask 20 times and count how often your brand appears. The share of responses that mention your brand is your visibility rate, and the range of uncertainty around it is your confidence interval.

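With 5 samples, the estimate is all over the place: your brand might appear 80% of the time or 20%, and you cannot tell which is closer to reality. For example, a brand mentioned in 2 of 5 responses has a 95% confidence interval of roughly 12% to 77% (using the Wilson method described below). At 20 samples (8 of 20), the interval narrows to roughly 22% to 61%, enough to be useful for spotting large gaps. At 50 (20 of 50), it tightens to roughly 28% to 54%, and it keeps shrinking as you add samples.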

The confidence interval is the honest part. It tells you: "Given what we have observed, the true value is most likely between X and Y." A wide interval means you need more data. A narrow one means you can trust the number.
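To make the sample-size effect concrete, here is a minimal simulation sketch. It assumes a hypothetical brand with a true visibility rate of 50% and treats every response as an independent yes-or-no draw, which is a simplification of how real providers behave. The function name and parameters are illustrative, not part of any tool.

```python
import random


def simulate_visibility_estimates(true_rate: float, n_samples: int,
                                  trials: int, seed: int = 0) -> list[float]:
    """Repeat a measurement `trials` times. Each measurement asks the same
    question `n_samples` times and records the share of responses that
    mention the brand. Assumes independent draws with a fixed true rate."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    estimates = []
    for _ in range(trials):
        hits = sum(rng.random() < true_rate for _ in range(n_samples))
        estimates.append(hits / n_samples)
    return estimates


# Hypothetical brand whose true visibility rate is 50%.
for n in (5, 20, 50):
    est = simulate_visibility_estimates(true_rate=0.5, n_samples=n, trials=1000)
    print(f"n={n:>2}: lowest estimate {min(est):.0%}, highest {max(est):.0%}")
```

The spread between the lowest and highest estimate shrinks as the number of samples per measurement grows, which is the same effect the confidence interval summarizes from a single measurement.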

When Is a Difference Real?

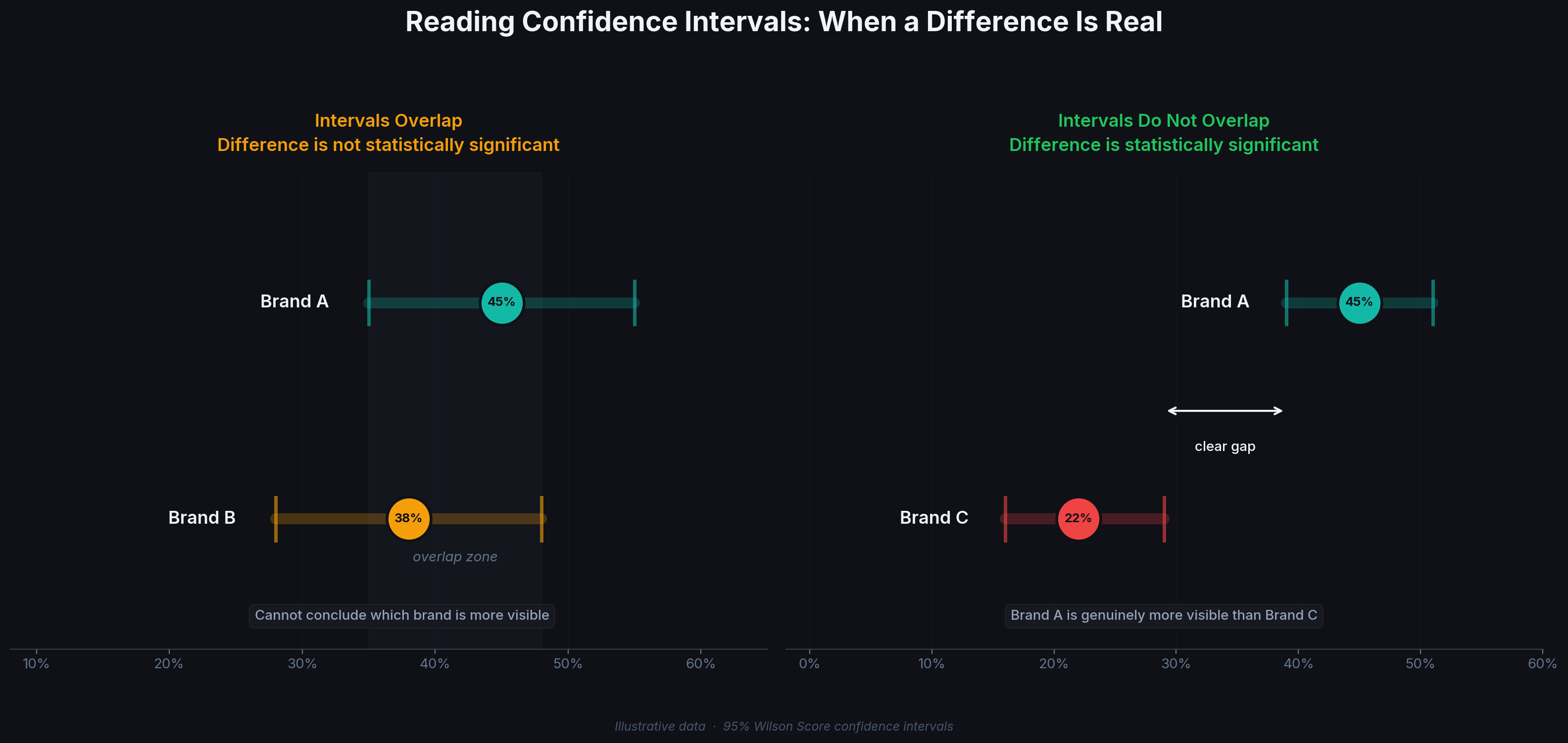

This is where confidence intervals earn their keep. Suppose you are comparing how often AI mentions your brand versus a competitor.

Scenario 1: You measure your brand at 45% and a competitor at 38%. Looks like you are ahead. But the confidence intervals overlap:

- Your brand: 45%, interval [35%, 55%]

- Competitor: 38%, interval [28%, 48%]

The true values could easily be identical. You cannot tell these apart with this data. Declaring victory here would be premature.

Scenario 2: You measure your brand at 45% and a different competitor at 22%. The intervals do not overlap:

- Your brand: 45%, interval [39%, 51%]

- Competitor: 22%, interval [16%, 29%]

Now the gap is real. Your brand is genuinely more visible, and you can make decisions based on that.

Without confidence intervals, both scenarios look like "we are ahead." With them, you can see that only one of those leads is meaningful. That distinction is the difference between data and guesswork.
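The overlap check in the two scenarios can be sketched in a few lines. The helper name `intervals_overlap` is hypothetical, and the intervals are the ones quoted above. Treat non-overlap as a conservative rule of thumb: two intervals can overlap slightly while a formal test would still find a difference.

```python
def intervals_overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """Return True when two closed intervals (low, high) share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Scenario 1: your brand [35%, 55%] vs. competitor [28%, 48%]
scenario_1 = intervals_overlap((0.35, 0.55), (0.28, 0.48))

# Scenario 2: your brand [39%, 51%] vs. competitor [16%, 29%]
scenario_2 = intervals_overlap((0.39, 0.51), (0.16, 0.29))

print(scenario_1)  # True: the lead could be noise
print(scenario_2)  # False: the gap is unlikely to be noise
```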

How Many Samples Do You Need?

Fewer than you might think.

Popsight uses Wilson Score confidence intervals, a statistical method designed for exactly this type of data: measuring how often something happens (like a brand appearing in an AI response). Unlike simpler methods, Wilson Score intervals work well at small sample sizes and handle extreme cases (a brand that shows up 0% or 100% of the time) without breaking.

For most brand visibility questions, 20 samples per query per provider is enough to surface meaningful trends. The intervals will be wider than with 50 or 100 samples, but they will be honest about that uncertainty.
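For readers who want to see the calculation, below is a minimal sketch of the standard Wilson score formula next to the simpler normal-approximation interval. It is an illustration of the textbook method, not Popsight's actual code, and the function names are assumptions. The value z = 1.96 corresponds to roughly 95% confidence.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion: `hits` mentions in `n` responses."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to guard against floating-point rounding at the edges.
    return max(0.0, center - half), min(1.0, center + half)


def naive_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Simple normal-approximation interval, shown only for comparison."""
    p = hits / n
    half = z * math.sqrt(p * (1 - p) / n)
    return max(0.0, p - half), min(1.0, p + half)


# A brand that never appeared in 20 responses.
print(naive_interval(0, 20))   # (0.0, 0.0): claims certainty from 20 samples
print(wilson_interval(0, 20))  # upper bound stays above zero, about 0.16

# A brand that appeared in 8 of 20 responses.
print(wilson_interval(8, 20))  # roughly (0.22, 0.61)
```

The comparison shows why the simpler method breaks at the extremes: zero mentions in 20 responses does not prove the true rate is zero, and the Wilson interval reflects that.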

One more detail that matters: the Penn State researchers tested whether AI outputs follow a bell curve (the familiar normal distribution that most basic statistics assume). They do not. The variation is lopsided and unpredictable. This means statistical shortcuts that assume bell-shaped data can give you misleading results. Wilson Score intervals sidestep the problem because they only need a yes-or-no count per response (the brand appeared or it did not), which is one reason we chose them.

Four Layers of Variation

When you measure AI brand visibility, four distinct sources of variation stack on top of each other:

1. Response variability. The same AI model, given the exact same prompt, produces different answers each time. This is what the Penn State study documented. It is built into the infrastructure.

2. Phrasing sensitivity. "What is the best CRM?" and "Which CRM do you recommend for a small business?" produce different brand lists even from the same model in the same minute.

3. Provider disagreement. ChatGPT, Claude, Gemini, and Perplexity each have their own training data and their own biases. In our own study of 3,837 AI queries across 16 brands, the four major providers agreed on their top recommendation only about a third of the time.

4. User personalization. Consumer AI products like ChatGPT remember your preferences. If you have told ChatGPT you prefer Notion, it will weight Notion more heavily in future recommendations. This is similar to how Google has personalized search results for over a decade: the same query produces different results for different users based on their history.

Popsight measures layers 1 through 3 by querying AI providers directly through their APIs. API connections are stateless: every query starts fresh with no user history, no memory, and no personalization. This gives you the baseline visibility for your brand: the recommendation a new user with no preferences would see. It is the layer you can track over time, compare across competitors, and actually influence through your content and positioning.

Layer 4, user personalization, sits on top of that baseline. It varies from person to person and is not something any tool can measure comprehensively. But the baseline matters, for the same reason that SEO professionals still track "clean" search rankings even though every Google user sees personalized results: the baseline is the controllable, comparable foundation that personalization shifts away from.

Any serious approach to generative engine optimization needs to account for all of this. That means: multiple samples per query, multiple query phrasings, multiple providers, confidence intervals on every number, and an honest understanding of what the numbers represent.
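As a rough illustration of what "multiple samples, multiple phrasings, multiple providers" looks like as data, here is a small tallying sketch. The observations, provider labels, and the `tally` helper are all hypothetical; a real run would have many more rows per cell, and each hits/total pair would then feed a confidence interval.

```python
from collections import defaultdict

# Hypothetical observations: (provider, phrasing, brand_was_mentioned).
observations = [
    ("chatgpt", "best CRM?", True),
    ("chatgpt", "best CRM?", False),
    ("chatgpt", "CRM for a small business?", True),
    ("claude", "best CRM?", False),
    ("claude", "CRM for a small business?", False),
    ("claude", "CRM for a small business?", True),
]


def tally(rows):
    """Count mentions and total responses per (provider, phrasing) pair."""
    counts = defaultdict(lambda: [0, 0])  # key -> [hits, total]
    for provider, phrasing, mentioned in rows:
        cell = counts[(provider, phrasing)]
        cell[0] += int(mentioned)
        cell[1] += 1
    return {key: tuple(value) for key, value in counts.items()}


for (provider, phrasing), (hits, total) in tally(observations).items():
    print(f"{provider:8} | {phrasing:28} | {hits}/{total} responses mention the brand")
```

Keeping the counts separate per provider and per phrasing preserves the layers described above, so a strong result on one provider cannot hide an absence on another.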

What This Means in Practice

If you are measuring or thinking about measuring AI brand visibility, here is what this research points to:

Single queries are not data. Any workflow that involves asking an AI a question once and recording the answer as your brand's AI presence is producing noise. This includes manual spot-checks, one-off competitor screenshots, and live demos where someone types a query on stage.

Percentages without ranges are incomplete. If a tool or a report shows you "your brand is mentioned 40% of the time" without a confidence interval, you are missing the most important part: how much to trust that number. A move from 40% to 35% might be normal variation. Or it might signal a real decline. The interval tells you which.

Measuring across providers matters. A brand can be the top recommendation on ChatGPT and completely absent on Gemini. Checking one provider tells you about that provider, not about your brand's AI visibility in general.

Variation is normal. The goal of measurement is not to eliminate variation. It is to collect enough data to see through it and to be transparent about what you know and what you do not.

Popsight calculates 95% Wilson Score confidence intervals for every metric, across every provider. Download free and run your first analysis in minutes. 14-day trial, all features, no credit card.